AI Is Causing a Major Energy Crisis! How to Navigate It

Artificial intelligence is often framed as a computing problem. But increasingly, it is becoming an energy problem.

Across the world, AI data centers are pushing electricity demand to levels utilities did not anticipate. For nearly two decades, electricity demand in many developed markets barely grew. Now, it is rising again, largely driven by data centers and AI workloads.

For tech leaders, this shift matters more than most realize. The constraint on the next generation of AI may not be chips, models, or talent. It may be power.

Understanding this emerging “AI power crisis” is essential for anyone building or scaling technology businesses.

The Hidden Cost of the AI Boom

Training and running modern AI systems requires enormous computational power. That computation is concentrated in large-scale data centers filled with GPUs operating around the clock.

The electricity footprint is staggering.

According to the IEA, data centre electricity demand is expected to more than double to about 945 terawatt-hours (TWh) by 2030, slightly more than the entire electricity consumption of Japan today.

A separate, more aggressive projection has global data center electricity demand more than doubling between 2022 and 2026 to surpass 1,000 TWh. Much of this growth is directly linked to AI workloads.

The IEA also reports that data centres accounted for about 1.5% of global electricity demand in 2024, a share set to rise to about 3% by 2030.
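To put that share figure in yearly terms, here is a rough sketch of the arithmetic. It assumes the share grows at a constant rate between 2024 and 2030, which is a simplification and not something the IEA states; the function name is purely illustrative.

```python
def implied_annual_growth(start: float, end: float, years: int) -> float:
    """Constant yearly growth rate that takes `start` to `end` in `years` years."""
    return (end / start) ** (1 / years) - 1


# Share of global electricity demand: about 1.5% in 2024, about 3% by 2030.
# Assumption: smooth compounding over the six years in between.
share_growth = implied_annual_growth(1.5, 3.0, 2030 - 2024)
print(f"Implied growth in data centre share: {share_growth:.1%} per year")
```

Under that assumption, the share has to grow by roughly 12% every year. Because total electricity demand is also rising, absolute data centre consumption would need to grow somewhat faster than that.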

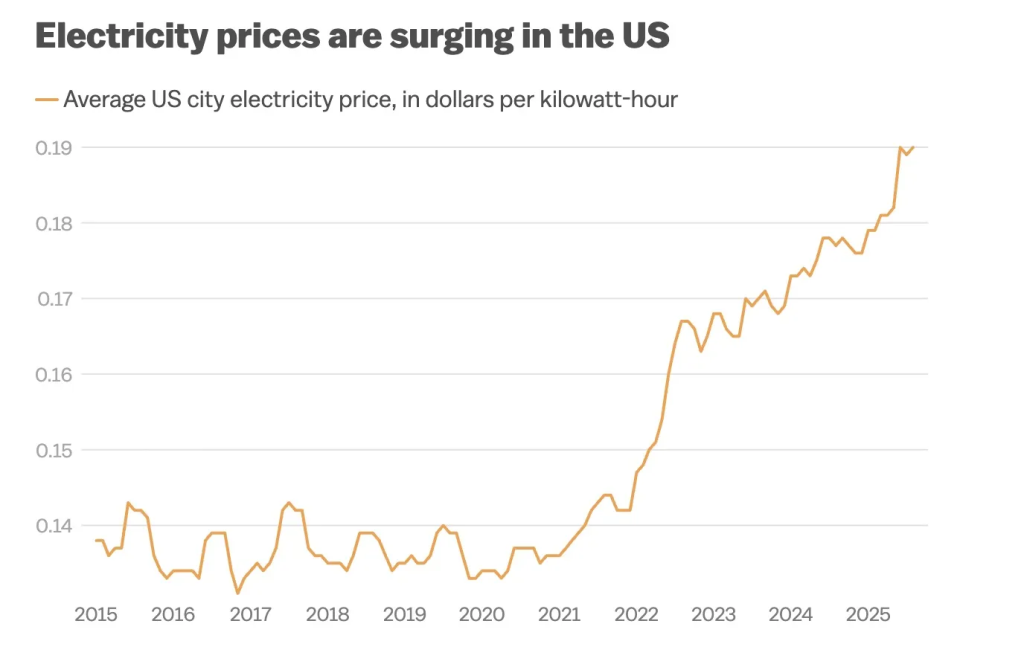

Why Electricity Bills Are Rising

Utilities build infrastructure based on expected demand growth. Historically, that growth was modest, around 1–2% annually.

AI is breaking those assumptions.

In some regions, electricity demand is rising 10% or more per year due to data centers and industrial electrification.
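The gap between those growth rates compounds quickly. The sketch below compares them over a ten-year horizon; the ten-year window and the function names are illustrative assumptions, chosen only to show how the math plays out.

```python
import math


def demand_multiple(rate: float, years: int) -> float:
    """How many times larger demand is after compounding `rate` for `years` years."""
    return (1 + rate) ** years


def doubling_time(rate: float) -> float:
    """Years needed for demand to double at a constant yearly growth rate."""
    return math.log(2) / math.log(1 + rate)


# 1% and 2% reflect the historical range; 10% reflects the fastest-growing regions.
for rate in (0.01, 0.02, 0.10):
    print(
        f"{rate:.0%} per year: x{demand_multiple(rate, 10):.2f} after 10 years, "
        f"doubles in about {doubling_time(rate):.0f} years"
    )
```

At 2% a year, demand grows by about 22% over a decade and takes roughly 35 years to double. At 10% a year, it reaches about 2.6 times its starting level in the same decade and doubles in about 7 years.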

To keep up, utilities must invest billions in:

- new power plants

- transmission lines

- grid upgrades

These infrastructure costs rarely stay isolated to the companies driving demand. They often spread across the entire ratepayer base.

That is one reason electricity prices in the U.S. have increased more than 30% since 2021, with AI-related demand contributing significantly to the trend.

Source: WIRED

Studies now estimate that AI-driven data center expansion could push household electricity bills 8% higher nationwide by 2030, and up to 25% in certain regions.

In other words, the AI boom is quietly becoming an energy affordability issue.

The Grid Was Not Built for AI

The core challenge is timing. Power infrastructure evolves slowly. Building new generation capacity or transmission lines can take 5 to 10 years due to regulatory approvals and construction timelines.

AI infrastructure evolves much faster. A hyperscale data center can go from planning to full operation in just a few years. When several launch in the same region, they can overwhelm local grids almost overnight.

That mismatch in timelines signals a deeper problem: the digital economy is scaling faster than the physical infrastructure that powers it.

The Strategic Risk for Tech Companies

Many technology leaders still treat energy as an operational detail. That assumption is becoming risky.

Power availability increasingly affects:

1. Data center location decisions

Regions with limited grid capacity may block or delay new facilities.

2. Infrastructure costs

Energy pricing volatility directly impacts cloud operating costs.

3. Regulatory scrutiny

Governments are beginning to question whether communities should subsidize AI infrastructure.

4. ESG commitments

Massive energy consumption puts pressure on sustainability targets.

5. AI scaling limits

In some regions, the grid itself may become the bottleneck for new compute clusters.

Simply put, the AI race is also becoming an energy race.

What Forward-Thinking Tech Leaders Are Doing

The companies moving fastest in AI are already adapting their strategies. Several approaches are emerging.

1. Direct energy partnerships

Some technology firms are signing long-term power agreements or investing directly in energy generation, including nuclear and advanced renewables.

2. Demand-response systems

Large data centers can temporarily reduce compute loads during peak grid stress to avoid blackouts and stabilize supply.

3. Geographic diversification

Rather than concentrating infrastructure in traditional data center hubs, companies are expanding into regions with surplus energy capacity.

4. Efficiency improvements

Advances in cooling, chip design, and workload scheduling can significantly reduce energy consumption per AI task.

These solutions will not eliminate the power challenge. But they can mitigate it.
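As a rough illustration of the demand-response idea, the sketch below pauses deferrable jobs when the grid asks a facility to cut its draw. Everything in it is hypothetical: the `Job` fields, the `shed_load` function, the job names, and the power figures are made up for the example, and a real system would respond to signals from the utility or grid operator.

```python
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    power_kw: float
    deferrable: bool  # assumption: batch work can pause, live inference cannot


def shed_load(jobs: list[Job], target_kw: float) -> tuple[list[Job], list[Job]]:
    """Pause deferrable jobs, largest first, until total draw is at or below target_kw.

    Returns (running, paused). If there is not enough deferrable load,
    the total may still exceed the target.
    """
    running = list(jobs)
    paused: list[Job] = []
    total = sum(job.power_kw for job in running)
    for job in sorted(jobs, key=lambda j: j.power_kw, reverse=True):
        if total <= target_kw:
            break
        if job.deferrable:
            running.remove(job)
            paused.append(job)
            total -= job.power_kw
    return running, paused


# Illustrative workload mix (made-up numbers).
jobs = [
    Job("inference-api", 400.0, deferrable=False),
    Job("training-run", 900.0, deferrable=True),
    Job("batch-embeddings", 300.0, deferrable=True),
]

# The grid requests a cap of 800 kW during a peak event.
running, paused = shed_load(jobs, target_kw=800.0)
print("running:", [job.name for job in running])
print("paused:", [job.name for job in paused])
```

In this example, pausing the single largest deferrable job brings the facility from 1,600 kW down to 700 kW, so user-facing inference keeps running. Pausing the largest jobs first is one simple policy among many; a production scheduler would likely also weigh deadlines and checkpoint costs.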

The Bigger Shift: Energy Is Now a Tech Strategy

For decades, computing was constrained by hardware innovation. Today, that constraint is shifting. The next generation of AI systems may require orders of magnitude more compute, and therefore energy.

Energy is no longer just a utility bill. It is becoming a strategic resource.

Tech leaders who understand this early will have an advantage. Those who ignore it may find that their biggest limitation is not algorithms or GPUs, but the electricity needed to run them.
