What Is an Agent Harness? What Is a Code Harness?

Everyone’s adding AI agents to their engineering pipelines. But most teams are still asking the wrong question, “Which model is best?”, when the smarter question is “What’s holding that model in place?” Agent harnesses and code harnesses are the quiet infrastructure deciding whether your AI investment ships results or spins in place. Here’s what they actually are, how they differ, and why getting this right may be the biggest leverage point in enterprise AI right now.

The model is not the product

There’s a tempting assumption baked into how most leaders think about AI: that better models equal better outcomes. Pick the strongest LLM, plug it into your workflow, and watch the results improve. In practice, that’s rarely how it plays out.

Consider what Stripe revealed in mid-2025: their engineering team was shipping roughly 1,300 AI-written pull requests every week. The model they used wasn’t custom; it was a standard frontier LLM. What made the difference was the surrounding architecture, a purpose-built system they called “Minions”.

Around the same time, OpenAI documented a three-engineer team producing a million-line codebase at 3.5 pull requests per engineer per day, with zero manually typed code. The method? Harness engineering.

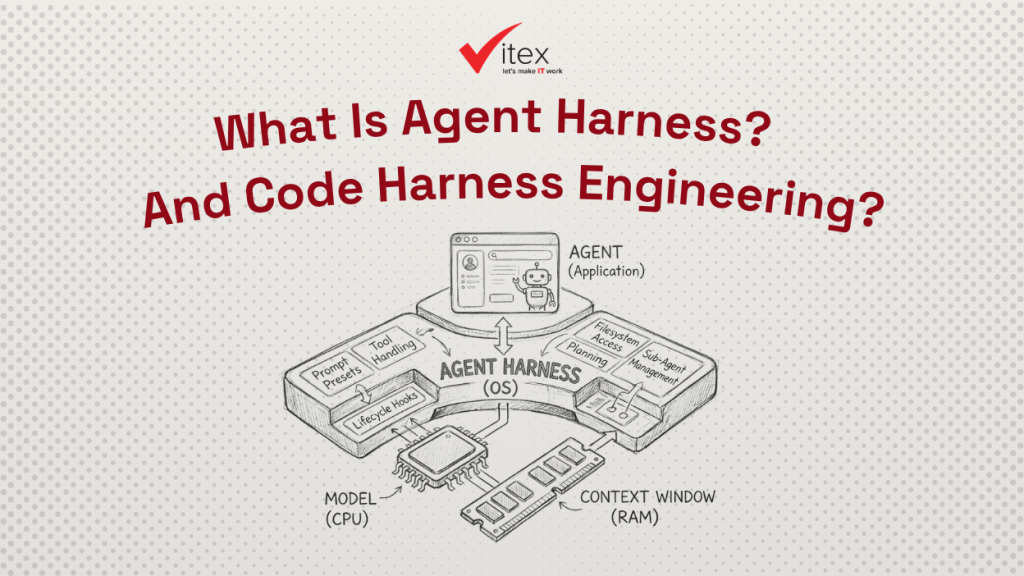

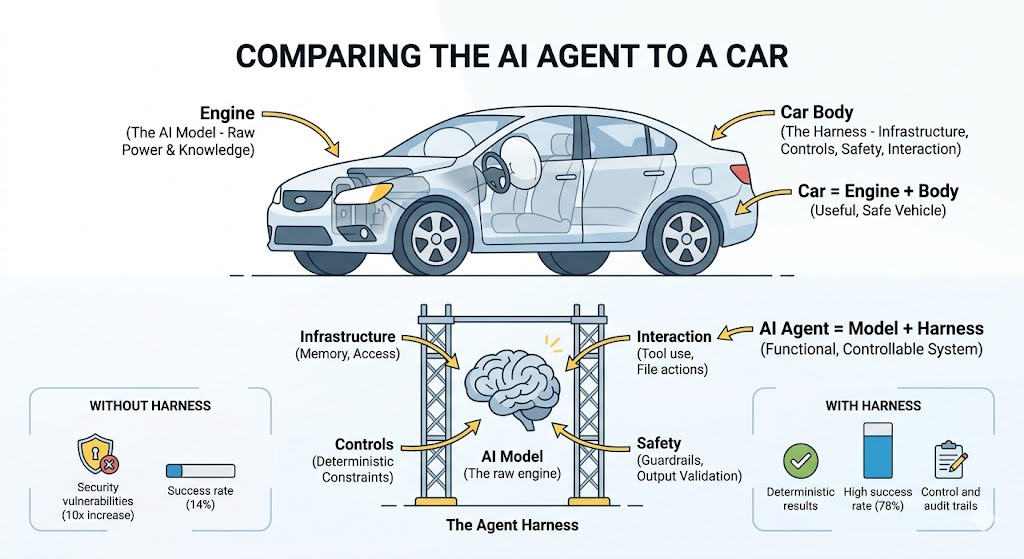

The model is the engine. The harness is the car. A great engine without steering, brakes, and a road to drive on goes precisely nowhere useful.

| Figure | What it describes |
| --- | --- |
| 1,300 | AI pull requests per week at Stripe |
| 14% | AI success rate on complex tasks without harness structure |
| 10× | Increase in AI-generated security findings without guardrails (mid-2025) |

What is a code harness?

A code harness (more formally called a test harness) was around long before AI entered the picture. At its core, it’s a controlled environment that wraps around software to run automated tests reliably, repeatedly, and at scale.

Think of it as a testing workbench. It includes the machinery to invoke components, feed them inputs, simulate missing dependencies (like a payment gateway that isn’t live yet), compare outputs against expected results, and report what passed or failed.
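To make the workbench idea concrete, here is a minimal sketch of a code harness using Python’s standard `unittest` module. The `checkout` function, the `gateway.charge` method, and the order statuses are hypothetical names invented for this illustration; the point is only to show the moving parts named above: invoking a component, feeding it inputs, simulating a missing dependency, comparing outputs, and reporting.

```python
import unittest
from unittest.mock import Mock


def checkout(cart_total_cents, gateway):
    """Hypothetical component under test: charge the cart and return a status."""
    if cart_total_cents <= 0:
        return "rejected"
    response = gateway.charge(cart_total_cents)
    return "paid" if response["ok"] else "failed"


class CheckoutTests(unittest.TestCase):
    def setUp(self):
        # Stand-in for the payment gateway that isn't live yet.
        self.gateway = Mock()

    def test_successful_charge(self):
        self.gateway.charge.return_value = {"ok": True}
        self.assertEqual(checkout(2500, self.gateway), "paid")
        self.gateway.charge.assert_called_once_with(2500)

    def test_declined_charge(self):
        self.gateway.charge.return_value = {"ok": False}
        self.assertEqual(checkout(2500, self.gateway), "failed")

    def test_empty_cart_never_hits_gateway(self):
        self.assertEqual(checkout(0, self.gateway), "rejected")
        self.gateway.charge.assert_not_called()


def run_harness():
    """Load the tests, run them, and return the result object for reporting."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(CheckoutTests)
    return unittest.TextTestRunner(verbosity=0).run(suite)


if __name__ == "__main__":
    result = run_harness()
    print(f"ran={result.testsRun} failures={len(result.failures)}")
```

In a real pipeline the same kind of runner would be triggered automatically on every code change rather than by hand.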

The business value is straightforward: bugs caught before deployment cost a fraction of bugs caught in production. Code harnesses make it feasible to run thousands of tests automatically after every code change, something no team can do manually. They also enable teams to test individual components in isolation, even when the rest of the system isn’t ready yet.

What is an agent harness?

An agent harness is different and newer. Where a code harness wraps around the software being tested, an agent harness wraps around the AI agent itself. It’s the scaffolding that controls how an LLM (like Claude, GPT-4, or Gemini) interacts with the real world.

A raw language model is, at its base, a text predictor. It doesn’t inherently know how to open a file, call an API, run a test suite, or submit a pull request. The harness gives it those capabilities, and just as importantly, controls what it can and can’t do.

The term “harness” is intentional here. In engineering, a harness implies constraint as much as capability. The goal isn’t just to give an AI agent more tools; it’s to keep it from going off the rails when no human is watching.

A production-grade agent harness typically handles: what tools the agent can access, how memory and context are managed across long tasks, what the agent is not allowed to do (guardrails), how outputs are validated before anything ships, and how the system logs what happened for audit purposes.
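As a rough illustration of three of those responsibilities (tool access, guardrails, and audit logging), here is a deliberately small sketch. Every name in it (`AgentHarness`, `call_tool`, `read_file`, the blocked `.env` pattern) is an assumption made up for this example, not the API of any real agent framework. Memory management and output validation are left out to keep it short.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AgentHarness:
    """Hypothetical harness: mediates every tool call an agent asks for."""
    tools: dict[str, Callable[[str], str]]       # allowlisted tools by name
    blocked_substrings: tuple[str, ...] = ()      # simple guardrail patterns
    audit_log: list[dict] = field(default_factory=list)

    def call_tool(self, name: str, arg: str) -> str:
        """Run one requested tool call, enforcing the allowlist and guardrails."""
        if name not in self.tools:
            return self._record(name, arg, "denied: tool not allowlisted")
        if any(bad in arg for bad in self.blocked_substrings):
            return self._record(name, arg, "denied: guardrail violation")
        return self._record(name, arg, self.tools[name](arg))

    def _record(self, name: str, arg: str, outcome: str) -> str:
        # Every attempt is logged, including the ones that were refused.
        self.audit_log.append({"tool": name, "arg": arg, "outcome": outcome})
        return outcome


def read_file(path: str) -> str:
    """Placeholder tool; a real one would read from disk."""
    return f"<contents of {path}>"


harness = AgentHarness(tools={"read_file": read_file}, blocked_substrings=(".env",))
print(harness.call_tool("read_file", "README.md"))    # allowed
print(harness.call_tool("read_file", "config/.env"))  # blocked by guardrail
print(harness.call_tool("delete_repo", "main"))       # tool was never granted
print(len(harness.audit_log), "calls recorded")
```

The design choice worth noticing is that the agent never touches a tool directly: every request passes through one choke point, which is what makes the rules enforceable and the log complete.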

How they relate and why the distinction matters

The simplest framing: a code harness tests software. An agent harness runs AI. But they’re increasingly interconnected, especially in engineering teams deploying AI coding agents.

| Code harness | Agent harness |
| --- | --- |
| Testing infrastructure | AI execution infrastructure |
| Wraps around your software to validate it. Automates test execution, catches regressions, and reports results. Exists to verify that code works as expected. | Wraps around an AI model to give it real-world capabilities. Controls what it can access, validates its outputs, and keeps it from producing unpredictable side effects. |

Martin Fowler’s 2026 piece on harness engineering offers a useful shorthand: “Agent = Model + Harness.” Everything in an AI agent except the model itself (the tools, the memory, the guardrails, the orchestration) lives in the harness. The harness is where your business logic, your security rules, and your quality standards actually get enforced.

There’s a strategic implication here: the harness is your competitive moat. Models are becoming commodities, and a better model ships every few months. Teams that invest in strong harness design can swap models freely because the tool integrations, memory architecture, and business logic stay intact. Teams that don’t are locked into rebuilding every time the model landscape shifts.
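One way to picture model swapping is a harness that depends only on a narrow interface. The sketch below is an assumption-laden toy: `Model`, `VendorAModel`, `VendorBModel`, and the reply-length rule are all invented for illustration, and the two “models” simply echo their prompt instead of calling a real service.

```python
from typing import Protocol


class Model(Protocol):
    """The only thing the harness needs from any model (hypothetical interface)."""
    def complete(self, prompt: str) -> str: ...


class VendorAModel:
    def complete(self, prompt: str) -> str:
        return f"[vendor-a] {prompt}"


class VendorBModel:
    def complete(self, prompt: str) -> str:
        return f"[vendor-b] {prompt}"


class Harness:
    """Owns prompt building and business rules; the model is a replaceable part."""

    def __init__(self, model: Model, max_reply_chars: int = 200):
        self.model = model
        self.max_reply_chars = max_reply_chars

    def run(self, task: str) -> str:
        prompt = f"Follow the team style guide. Task: {task}"
        reply = self.model.complete(prompt)
        # Rule enforced by the harness no matter which model produced the reply.
        return reply[: self.max_reply_chars]


# Swapping the model is a one-argument change; everything else stays put.
print(Harness(VendorAModel()).run("rename the config loader"))
print(Harness(VendorBModel()).run("rename the config loader"))
```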

The cost of skipping this

If this sounds like an engineering concern, not a leadership one, consider what happens when harnesses are absent or weak. Apiiro’s September 2025 analysis found that AI-generated code introduced over 10,000 new security vulnerabilities per month by mid-2025 across studied repositories, a tenfold increase from December 2024.

Without a harness, telling an AI agent to “follow our coding standards” is just a prompt. It’s probabilistic: the agent might comply, or it might not. A harness enforces deterministic constraints: a linter blocks the PR, a gate stops the deploy. The distinction isn’t philosophical; it’s the difference between hoping your AI behaves and ensuring it does.
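Here is a minimal sketch of what a deterministic gate can look like, using Python’s standard `ast` module to inspect a proposed change. The function names (`gate_change`, `can_merge`) and the rule itself (no direct `eval` or `exec` calls) are assumptions chosen for illustration; a real pipeline would typically rely on an established linter and its own rule set.

```python
import ast


def gate_change(source: str, banned_calls=("eval", "exec")) -> list[str]:
    """Return a list of violations; an empty list means the change may proceed."""
    try:
        tree = ast.parse(source)
    except SyntaxError as err:
        return [f"syntax error on line {err.lineno}"]

    violations = []
    for node in ast.walk(tree):
        # Flag direct calls such as eval(...); aliased or attribute calls are
        # out of scope for this simplified check.
        if (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Name)
            and node.func.id in banned_calls
        ):
            violations.append(f"banned call {node.func.id}() on line {node.lineno}")
    return violations


def can_merge(source: str) -> bool:
    """The gate: same input, same answer, every time."""
    return not gate_change(source)


print(can_merge("total = price * quantity\n"))
print(gate_change("result = eval(user_input)\n"))
```

Unlike a prompt, this check gives the same verdict for the same input on every run, which is what lets it act as a hard stop rather than a suggestion.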

Benchmark results point the same way: in the WebArena evaluation (2023), the best general-purpose GPT-4-based agent completed only about 14% of complex end-to-end tasks, compared to 78% for humans on the same tasks.

What this means for your team

Whether you’re deploying AI coding agents, customer-facing AI, or internal automation, the same principle applies: the infrastructure around the model shapes results more than the model itself.

For your engineering leaders, that means treating harness design as a first-class engineering discipline, not an afterthought. For you as a business leader, it means asking harder questions when evaluating AI vendors and internal initiatives: What guardrails are in place? How are outputs validated before they reach production? What happens when the model fails?

The EU’s AI Act, which entered into force in August 2024 and phases in its obligations over the following years, classifies a range of enterprise AI uses as high-risk and mandates lifecycle risk management and accuracy standards for them. A well-designed harness isn’t just good engineering; it’s increasingly a compliance requirement.

Teams that get this right, like Stripe, Spotify (which has merged 1,500+ AI-generated PRs since mid-2024 through careful verification loops), and Google, aren’t winning because they have better models. They’re winning because they built better harnesses.

In practice, 70% of companies currently use hybrid models combining onshore oversight with offshore development, achieving 20–40% cost improvements while maintaining project control.

If your team is scaling AI agents, the most important investment you can make right now isn’t a better model; it’s better infrastructure around the one you have. Understanding harness design is where that starts.
